Anthropic didn't want their AI tech used for surveillance on Americans or autonomous weapons stri...

About the Creator

Senator Mark Kelly (D-Arizona) is a retired U.S. Navy captain and astronaut who serves in the U.S. Senate. He is known for his direct, critical commentary on military and defense policy issues, often challenging administration decisions he views as problematic. Kelly has established credibility in national security matters given his military background and oversight role on defense-related committees.

What's This About?

This post addresses a significant conflict between Anthropic, an AI safety company, and the Trump administration over the use of Claude AI in government systems. Anthropic maintained ethical safeguards prohibiting Claude from being used for mass domestic surveillance of Americans and fully autonomous weapons systems, citing concerns about reliability and democratic values. When Defense Secretary Pete Hegseth demanded unrestricted access, Anthropic refused, leading the Trump administration to threaten the company and subsequently order all federal agencies to cease using Anthropic's Claude AI, with a six-month phase-out for military departments. The dispute highlights tensions between corporate AI safety ethics and government pressure for unfettered technological access.

🔥Why It's Trending

This post is trending because it bears on high-stakes debates about AI governance, corporate autonomy versus government authority, and national security policy under the Trump administration. It gained visibility amid broader discussion of AI companies' willingness to resist government pressure: Senator Kelly's criticism contrasts sharply with the view of the administration's supporters, who framed Anthropic's position as weakening U.S. AI competitiveness against China. The controversy also intersects with several other trending topics, including AI regulation, military technology policy, and constitutional tensions between branches of government.

💡Fun Facts

- Anthropic specifically prohibited Claude from being used in systems that conduct large-scale monitoring of U.S. persons on American soil, distinguishing this from foreign surveillance or national security intelligence activities.

- Other major AI providers including Google, OpenAI, and xAI reportedly accepted Defense Department contracts with fewer restrictions than Anthropic demanded.

- The Pentagon estimated it could take months to replace Anthropic's tools on classified systems, indicating significant integration of Claude into existing military infrastructure.

- Anthropic CEO Dario Amodei argued that frontier AI systems are 'simply not reliable enough' for high-stakes applications like autonomous weapons, raising questions about AI safety in military contexts.

- The Trump administration imposed a six-month phase-out period for military agencies rather than an immediate ban, suggesting the operational complexity of removing the technology.

More Trending on Twitter

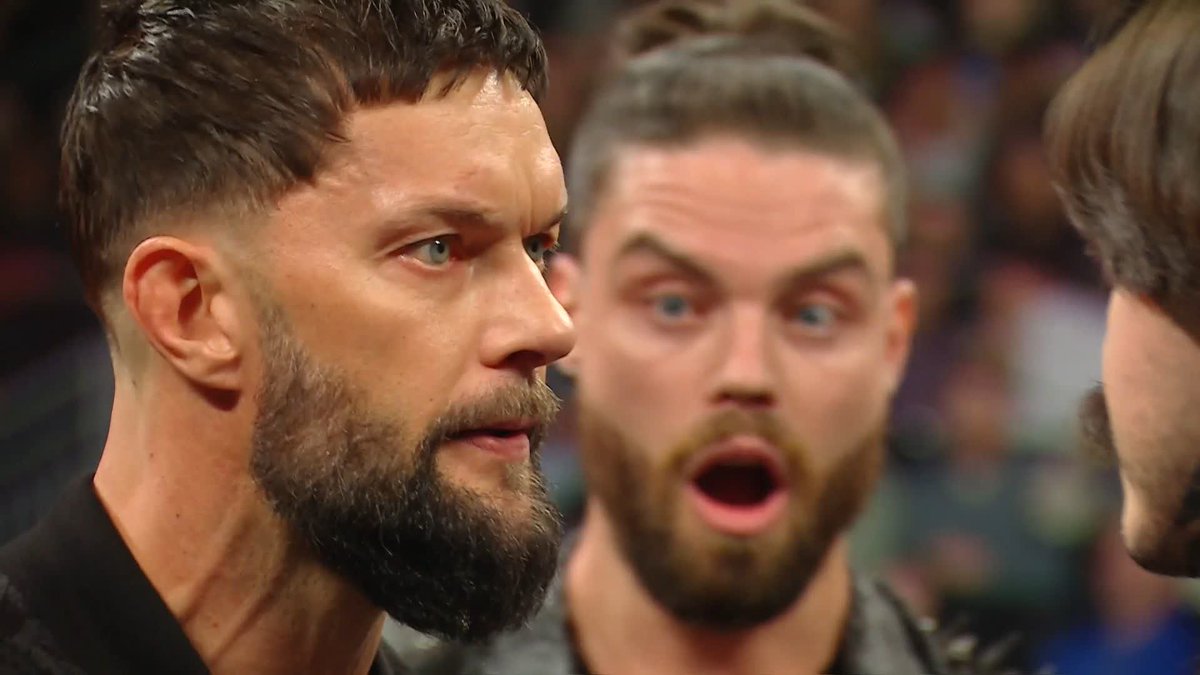

Finn Bálor turned on Dominik Mysterio. …but The Judgment Day ends up turning on Bálor. Bálor is...

Finn Bálor turned on Dominik Mysterio. …but The Judgment Day ends up turning on Bálor. Bálor is officially out of The Judgment Day. #WWERAW https://t.co/mftPqmuDPN

by Wrestle Ops

The masked men just bundling together until Seth Rollins is left in the ring. #WWERAW https://t...

The masked men just bundling together until Seth Rollins is left in the ring. #WWERAW https://t.co/2L3n5lcb7K

by Wrestle Ops

Finn Balor’s time in The Judgment Day is officially over ⚖️ 2022-2026 https://t.co/wT1tAHHQB6

Finn Balor’s time in The Judgment Day is officially over ⚖️ 2022-2026 https://t.co/wT1tAHHQB6

by WrestlingWorldCC